Mastering Data Virtualization: Strategies, Tools, and Best Practices

Ananya Arora

May 9, 2024

Introduction to Data Virtualization

Data virtualization is a modern data integration technique that allows organizations to create a unified, real-time view of their data without physically moving or replicating it. By abstracting data from various sources and presenting it as a single virtual layer, data virtualization enables users to seamlessly access and combine data from disparate systems, regardless of location or format.

Unlike traditional data integration methods, such as ETL (Extract, Transform, Load) or data warehousing, data virtualization does not require the physical movement of data. Instead, it leverages a virtual data layer between the data sources and the consuming applications, providing a unified interface for accessing and querying data in real time. This approach offers significant advantages in agility, flexibility, and cost-effectiveness.

This comprehensive blog post will explore the fundamental concepts, benefits, tools, and best practices of data virtualization. We will examine how data virtualization differs from other data integration approaches and discuss its role in enabling modern data architectures such as logical data warehouses and data fabrics.

Whether you are a data architect, IT professional, or business decision-maker, understanding data virtualization is crucial in today’s data-driven landscape. By the end of this blog post, you will have a solid grasp of data virtualization and its potential to transform your organization’s data management strategies.

Understanding Data Virtualization

At the core of data virtualization lies the concept of virtual data. Virtual data is a logical representation of data not physically stored in a single location but dynamically accessed and combined from various sources on demand. This virtual data layer acts as an abstraction layer, hiding the complexity and heterogeneity of the underlying data sources from the consuming applications.
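To make the idea of virtual data concrete, here is a minimal Python sketch rather than a real platform. It assumes two stand-in sources (an in-memory SQLite database and a CSV string) and hypothetical names such as `read_customers`, `read_invoices`, and `customer_spend_view`. The logical view is computed on demand each time it is queried, and nothing is copied into a separate store.

```python
import csv
import io
import sqlite3

# Source 1: a relational system (in-memory SQLite stands in for a real database).
crm_db = sqlite3.connect(":memory:")
crm_db.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
crm_db.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Asha"), (2, "Ben")])

# Source 2: a CSV export from a hypothetical billing system.
BILLING_CSV = "customer_id,amount\n1,120.50\n1,30.00\n2,75.25\n"


def read_customers():
    """Connector for the relational source: yields rows as dicts."""
    for cid, name in crm_db.execute("SELECT id, name FROM customers ORDER BY id"):
        yield {"id": cid, "name": name}


def read_invoices():
    """Connector for the CSV source: yields rows as dicts."""
    for row in csv.DictReader(io.StringIO(BILLING_CSV)):
        yield {"customer_id": int(row["customer_id"]), "amount": float(row["amount"])}


def customer_spend_view():
    """Logical view: joins both sources at query time; nothing is persisted."""
    totals = {}
    for inv in read_invoices():
        totals[inv["customer_id"]] = totals.get(inv["customer_id"], 0.0) + inv["amount"]
    return [
        {"name": c["name"], "total_spend": totals.get(c["id"], 0.0)}
        for c in read_customers()
    ]


view_rows = customer_spend_view()
for row in view_rows:
    print(row)
```

A consumer only ever calls `customer_spend_view()`; where the rows come from, and in which format, stays hidden behind the connectors.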

Data virtualization techniques and technologies enable the creation and management of this virtual data layer. Some key components of data virtualization include:

- Data Connectors: Data virtualization platforms provide connectors to a wide range of data sources, such as databases, data warehouses, cloud storage, APIs, and more. These connectors enable seamless access to data regardless of its location or format.

- Data Modeling and Mapping: Data virtualization tools offer rich data modeling and mapping capabilities. They allow you to define logical data models that represent the unified view of your data, irrespective of the physical data structures. These models can be created using familiar concepts like tables, views, and relationships, making it easier for users to understand and work with the virtualized data.

- Query Optimization: Data virtualization platforms employ advanced query optimization techniques for efficient and fast data retrieval. They can analyze and optimize queries in real time, pushing down processing to the source systems when possible and minimizing data movement. This optimization ensures users can access and query the virtualized data with minimal latency.

- Caching and Performance Optimization: Data virtualization tools often incorporate caching mechanisms to enhance performance further. Frequently accessed or computationally intensive data can be cached in memory or a high-performance storage layer, reducing the need for repetitive data retrieval and processing. This caching helps deliver faster response times and improves scalability.

- Data Security and Governance: Data virtualization platforms provide robust security and governance features. They allow you to define and enforce access controls, data masking, and data lineage at the virtual data layer. This ensures that data is accessed only by authorized users and in compliance with data governance policies.

By leveraging these techniques and technologies, data virtualization enables organizations to create a unified, real-time view of their data landscape, breaking down data silos and enabling agile data integration and analysis.
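The query optimization point above, pushing processing down to the source, can be illustrated with a small sketch. It assumes an in-memory SQLite table as the source system; `fetch_without_pushdown` and `fetch_with_pushdown` are hypothetical helper names, and real platforms decide this automatically in their query planners.

```python
import sqlite3

# In-memory SQLite stands in for a remote source system.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE orders (id INTEGER, region TEXT, amount REAL)")
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "EU", 10.0), (2, "US", 20.0), (3, "EU", 30.0), (4, "APAC", 40.0)],
)


def fetch_without_pushdown(region):
    """Pull every row into the virtual layer, then filter there."""
    rows = source.execute("SELECT id, region, amount FROM orders").fetchall()
    return len(rows), [r for r in rows if r[1] == region]


def fetch_with_pushdown(region):
    """Send the filter to the source so only matching rows are transferred."""
    rows = source.execute(
        "SELECT id, region, amount FROM orders WHERE region = ?", (region,)
    ).fetchall()
    return len(rows), rows


moved_naive, result_naive = fetch_without_pushdown("EU")
moved_pushed, result_pushed = fetch_with_pushdown("EU")
print(f"rows moved without pushdown: {moved_naive}, with pushdown: {moved_pushed}")
```

Both functions return the same answer; the difference is how many rows leave the source, which is what dominates latency when the source is remote.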

Benefits of Data Virtualization

Implementing data virtualization solutions offers numerous strategic advantages to organizations. Some of the key benefits include:

- Agility and Flexibility: Data virtualization enables agile data integration by allowing organizations to quickly combine and access data from disparate sources without needing physical data movement. This agility is particularly valuable when data needs to be integrated from multiple systems or new data sources must be incorporated rapidly.

- Faster Time-to-Insights: With data virtualization, users can access and query data in real time without the delays associated with traditional ETL processes. This enables faster decision-making and allows organizations to respond quickly to changing business needs and market dynamics.

- Reduced Data Movement and Replication: Data virtualization minimizes the need for data movement and replication, allowing users to access data directly from the source systems. This reduces the costs and complexities associated with data storage and maintenance and the risks of data inconsistencies and errors arising from data duplication.

- Simplified Data Landscape: Data virtualization simplifies the data landscape by creating a unified virtual data layer that abstracts the complexity of the underlying data sources. This makes it easier for users to discover, understand, and work with data, regardless of origin or format.

- Enhanced Data Governance and Security: Data virtualization platforms provide centralized data governance and security features. They allow organizations to define and enforce data access controls, data masking, and data lineage at the virtual data layer, ensuring that data is accessed securely and complies with regulatory requirements.

- Cost Savings: Data virtualization can significantly reduce the costs associated with data integration and management. Organizations can save on storage and infrastructure costs by eliminating the need for physical data movement and replication. Additionally, the agility and flexibility provided by data virtualization can lead to faster time-to-market and improved operational efficiency, resulting in further cost savings.

- Enablement of Advanced Analytics: Data virtualization enables organizations to easily combine and access data from various sources, including structured, semi-structured, and unstructured data. This empowers users to perform advanced analytics, such as data mining, predictive modeling, and machine learning, on a unified view of the data, leading to deeper insights and better decision-making.

By leveraging the power of data virtualization, organizations can unlock the full potential of their data assets, drive innovation, and gain a competitive edge in today’s data-driven world.

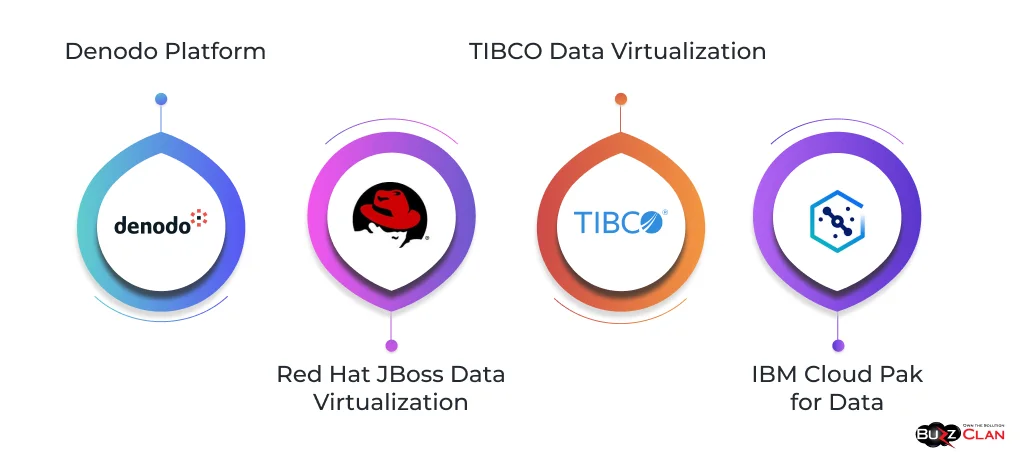

Data Virtualization Tools and Platforms

Organizations can choose from various powerful tools and platforms to effectively implement data virtualization. Some of the leading data virtualization tools include:

- Denodo Platform: Denodo is a comprehensive data virtualization platform that provides advanced data integration, abstraction, and real-time access capabilities. It offers connectors for a wide range of data sources and includes data modeling, query optimization, and caching features. Denodo’s platform is known for its scalability, performance, and ease of use.

- Red Hat JBoss Data Virtualization: JBoss Data Virtualization is a solution built on the open-source Teiid project that allows organizations to create a unified view of their data across disparate sources. It provides a virtual data layer that enables real-time data access, integration, and transformation. JBoss Data Virtualization supports many data sources and offers features like data federation, caching, and security.

- TIBCO Data Virtualization: TIBCO offers a robust data virtualization platform that enables organizations to create a unified view of their data landscape. It provides a virtual data layer that allows users to access and combine data from various sources in real time. TIBCO Data Virtualization includes data modeling, data transformation, query optimization, and robust security and governance capabilities.

- IBM Cloud Pak for Data: IBM Cloud Pak for Data is an integrated data and AI platform with data virtualization capabilities. It allows organizations to connect to and integrate data from various sources, create a unified data view, and enable real-time data access and analysis. IBM Cloud Pak for Data offers a user-friendly interface, advanced analytics capabilities, and seamless integration with other IBM tools and services.

When comparing data virtualization tools, it’s important to consider factors such as the range of supported data sources, ease of use, performance and scalability, security and governance features, and integration with existing data management tools and platforms. Organizations should evaluate their specific data integration requirements, IT infrastructure, and budget to select the tool that best aligns with their needs.

Implementing Data Virtualization

Implementing a data virtualization solution involves several key steps to ensure a successful deployment. Here’s a general approach to implementing data virtualization effectively:

| Steps | Description |
|---|---|
| Define Business Requirements | Define the business requirements and objectives for data virtualization. Identify the data sources that need to be integrated, the target consumers of the virtualized data, and the specific use cases and analytics requirements. |
| Assess Data Landscape | Conduct a thorough assessment of your organization's current data landscape. Identify the various data sources, their formats, locations, and existing data integration processes. This assessment will help understand the complexity and scope of data virtualization implementation. |
| Select Data Virtualization Platform | Based on the business requirements and the assessment of the data landscape, select a suitable data virtualization platform. Consider the platform's capabilities, scalability, performance, security features, and compatibility with your existing data management tools and infrastructure. |
| Design Virtual Data Layer | Design the virtual data layer by creating logical data models that represent the unified view of your data. Define the data entities, relationships, and transformations required to combine and present the data from different sources. Collaborate with business stakeholders and data consumers to ensure the virtual data models meet their needs. |
| Configure Data Connectors | Set up the necessary data connectors to connect the data virtualization platform and the various data sources. Configure the connectors to extract data from databases, data warehouses, cloud storage, APIs, and other relevant sources. |
| Implement Data Security and Governance | Implement data security and governance measures within the data virtualization platform. Define data access controls, data masking rules, and data lineage to ensure that data is accessed securely and complies with data governance policies. |
| Optimize Performance | Optimize the performance of the data virtualization solution by leveraging query optimization techniques, caching mechanisms, and data compression. Monitor and tune the performance regularly to ensure optimal response times and scalability. |
| Test and Validate | Thoroughly test and validate the data virtualization implementation. Verify that the virtualized data is accurate, consistent, and accessible as expected. Conduct performance testing to ensure the solution can handle the required data volumes and concurrent users. |
| Deploy and Monitor | Deploy the data virtualization solution into production environments. Establish monitoring and alerting mechanisms to identify and resolve any issues or performance bottlenecks proactively. Continuously monitor the solution to ensure its reliability, availability, and performance. |
| Train and Educate Users | Provide training and education to the users of the virtualized data. Help them understand how to access and query the virtualized data effectively. Encourage collaboration and knowledge sharing among data consumers to maximize the value of the data virtualization implementation. |

Common challenges in data virtualization implementation

Common challenges include managing complex data source integrations, ensuring data quality and consistency, optimizing query performance, and addressing data security and compliance requirements. To overcome these challenges, it’s essential to follow best practices such as:

- Conducting thorough data profiling and quality assessments

- Implementing robust data governance processes

- Leveraging query optimization techniques and caching mechanisms

- Regularly monitoring and tuning performance

- Collaborating closely with business stakeholders and data consumers

By following these steps and best practices, organizations can successfully implement data virtualization and unlock the full potential of their data assets.
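As an illustration of the caching practice listed above, the following sketch shows a time-based (TTL) cache in front of a slow source query. The `TTLCache` class, the 60-second TTL, and the injectable clock are assumptions made for this example; commercial platforms ship their own caching layers with richer invalidation options.

```python
import time


class TTLCache:
    """Tiny time-based cache; the TTL value and injectable clock are illustrative."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (time_loaded, value)

    def get_or_load(self, key, loader):
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # still fresh: skip the source entirely
        value = loader()  # missing or expired: go back to the source
        self._store[key] = (now, value)
        return value


calls = {"source": 0}


def slow_source_query():
    """Stands in for an expensive query against a source system."""
    calls["source"] += 1
    return [("EU", 40.0), ("US", 20.0)]


fake_now = [0.0]  # controllable clock so the example runs instantly
cache = TTLCache(ttl_seconds=60, clock=lambda: fake_now[0])

first = cache.get_or_load("sales_by_region", slow_source_query)   # miss: queries the source
second = cache.get_or_load("sales_by_region", slow_source_query)  # hit: served from cache
fake_now[0] = 61.0
third = cache.get_or_load("sales_by_region", slow_source_query)   # expired: queries again
print("source queries issued:", calls["source"])
```

The trade-off is freshness: a longer TTL reduces load on the source but lets consumers see older data, so the value should follow how quickly the underlying data changes.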

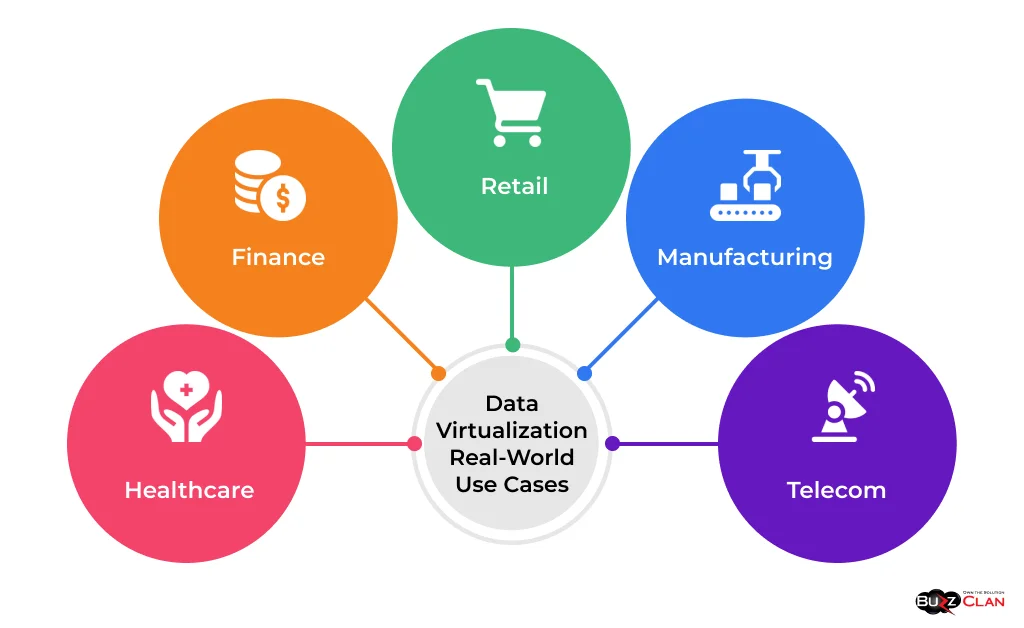

Data Virtualization Use Cases

Data virtualization finds applications across various industries, enabling organizations to effectively integrate and leverage their data assets. Here are some real-world use cases showcasing the power of data virtualization:

- Healthcare: In the healthcare industry, data virtualization enables the integration of patient data from disparate sources, such as electronic health records (EHRs), clinical trials, and medical imaging systems. By creating a unified view of patient data, healthcare providers can better understand patient health, improve clinical decision-making, and enhance patient outcomes.

- Finance: Financial institutions leverage data virtualization to integrate data from various systems, including core banking systems, trading platforms, and customer relationship management (CRM) systems. By virtualizing financial data, organizations can gain real-time visibility into customer portfolios, risk exposure, and compliance requirements, enabling faster decision-making and improved risk management.

- Retail: Retailers use data virtualization to combine data from multiple channels, such as in-store transactions, e-commerce platforms, and social media interactions. By creating a unified view of customer data, retailers can gain insights into customer behavior, preferences, and purchasing patterns, enabling personalized marketing campaigns and improved customer experience.

- Manufacturing: Manufacturing companies leverage data virtualization to integrate data from various systems, such as production systems, supply chain management systems, and quality control systems. By virtualizing manufacturing data, organizations can optimize production processes, reduce downtime, and improve product quality, increasing operational efficiency and cost savings.

- Telecommunications: Telecom companies use data virtualization to integrate data from billing, network performance monitoring, and customer service systems. By virtualizing telecom data, providers can gain real-time insights into network usage, customer behavior, and service quality, enabling proactive issue resolution and improved customer satisfaction.

Case studies of successful data virtualization implementations further highlight the transformative power of this technology. For example, a global financial institution implemented a data virtualization solution to integrate data from over 200 disparate sources, enabling real-time risk analysis and regulatory reporting. The solution significantly reduced data integration costs and improved data accessibility for business users.

Another example is a healthcare provider that used data virtualization to integrate patient data from multiple EHR systems and clinical research databases. By creating a unified view of patient data, the provider was able to identify potential drug interactions, improve clinical trial recruitment, and enhance patient care coordination.

These use cases and case studies demonstrate the versatility and value of data virtualization across industries, showcasing its ability to break down data silos, enable real-time data access, and drive data-driven decision-making.

Data Virtualization vs Other Technologies

Data virtualization is often compared to other data integration technologies, such as data federation, data mesh, and traditional ETL (Extract, Transform, Load) processes. Let’s explore the differences and similarities between these approaches:

- Data federation focuses on providing a unified view of data from multiple sources, similar to data virtualization. However, data federation typically involves querying the data sources directly and combining the results without creating a separate virtual data layer.

- Data virtualization, on the other hand, creates an abstracted virtual data layer that sits between the data sources and the consuming applications. This virtual layer allows more advanced data transformation, caching, and performance optimization.

- Data mesh is an architectural approach emphasizing decentralized data ownership and governance. It promotes treating data as a product and enabling domain-driven data management.

- Data virtualization can be used within a data mesh architecture to create a unified data view across different domains. It allows for integrating and accessing data from various domain-specific data products, enabling cross-domain analysis and insights.

- ETL processes involve extracting data from source systems, transforming it, and loading it into a target system, such as a data warehouse. They are typically used for batch data integration and require physical data movement.

- Data virtualization, in contrast, does not physically move the data. It creates a virtual data layer, allowing real-time data access and integration without requiring extensive data movement and transformation.
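The contrast between ETL and data virtualization can be sketched in a few lines. This example assumes two in-memory SQLite databases standing in for a source system and a warehouse; `run_etl_batch`, `qty_from_warehouse`, and `qty_from_virtual_view` are hypothetical names used only for illustration.

```python
import sqlite3

# Operational source system.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, qty INTEGER)")
source.execute("INSERT INTO inventory VALUES ('A-100', 5)")

# Warehouse that holds a physical copy loaded by ETL.
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE inventory_copy (sku TEXT PRIMARY KEY, qty INTEGER)")


def run_etl_batch():
    """ETL: extract from the source and load a physical copy into the warehouse."""
    rows = source.execute("SELECT sku, qty FROM inventory").fetchall()
    warehouse.execute("DELETE FROM inventory_copy")
    warehouse.executemany("INSERT INTO inventory_copy VALUES (?, ?)", rows)


def qty_from_warehouse(sku):
    """Reads the copied data, which is only as fresh as the last batch."""
    return warehouse.execute(
        "SELECT qty FROM inventory_copy WHERE sku = ?", (sku,)
    ).fetchone()[0]


def qty_from_virtual_view(sku):
    """Virtualization: delegates to the source at query time, no copy involved."""
    return source.execute(
        "SELECT qty FROM inventory WHERE sku = ?", (sku,)
    ).fetchone()[0]


run_etl_batch()
source.execute("UPDATE inventory SET qty = 2 WHERE sku = 'A-100'")  # source changes

stale = qty_from_warehouse("A-100")    # still the value from the last batch
live = qty_from_virtual_view("A-100")  # reflects the update immediately
run_etl_batch()
refreshed = qty_from_warehouse("A-100")
print(stale, live, refreshed)
```

The warehouse copy catches up only after the next batch run, while the virtual view pays for its freshness by querying the source on every request.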

Scenarios where data virtualization is the preferred solution include:

- Real-time data access and integration requirements

- Need for agility and flexibility in integrating new data sources

- Situations where physical data movement is not feasible or desirable

- Complex data landscapes with multiple disparate data sources

- Requirements for self-service data access and exploration

On the other hand, ETL processes are more suitable for scenarios involving large-scale data transformation and historical data analysis, where data latency is acceptable and physical data movement is necessary for performance or regulatory reasons.

Data virtualization can also complement other data integration approaches. For example, it can be used with ETL processes to enable real-time data access while leveraging the transformed data in the target systems.

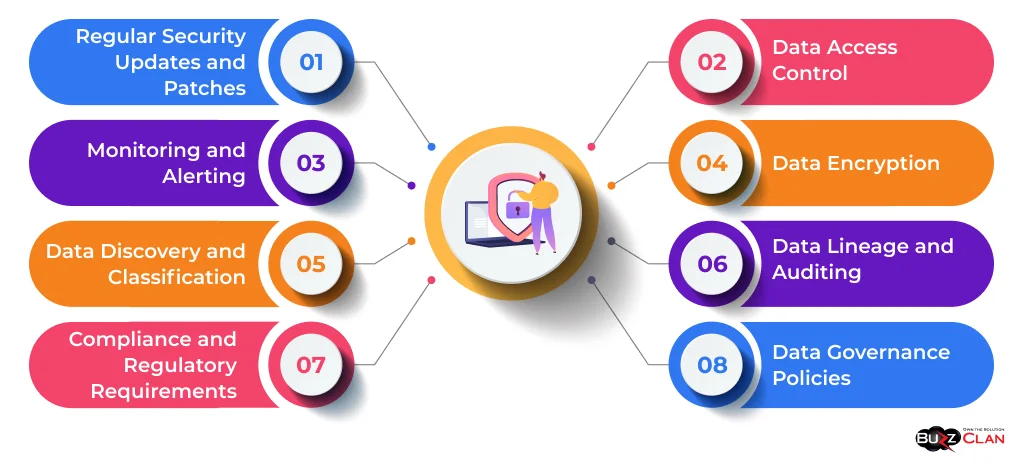

Data Virtualization Security and Governance

Data security and governance are critical considerations when implementing data virtualization. Here are some strategies and best practices for ensuring data security and compliance in a virtualized environment:

- Implement robust access control mechanisms within the data virtualization layer to ensure only authorized users can access sensitive data.

- Use role-based access control (RBAC) to define and enforce granular access privileges based on user roles and responsibilities.

- Implement data masking and redaction techniques to protect sensitive data elements and comply with data privacy regulations.

- Encrypt sensitive data at rest and in transit to prevent unauthorized access and protect data confidentiality.

- Use industry-standard encryption algorithms and secure communication protocols to safeguard data as it moves between the data virtualization layer and the consuming applications.

- Maintain a clear record of data lineage, tracing the origin, movement, and transformation of data across the virtualized environment.

- Implement auditing mechanisms to track and log data access, modifications, and usage, enabling accountability and facilitating compliance reporting.

- Establish clear data governance policies and procedures that define data ownership, stewardship, and usage guidelines.

- Ensure that data virtualization practices align with the organization’s overall data governance framework, including data quality, metadata management, and data lifecycle management.

- Understand and adhere to relevant industry-specific regulations and data privacy laws, such as GDPR, HIPAA, and PCI DSS, when implementing data virtualization.

- Conduct regular compliance audits and assessments to ensure the virtualized environment meets regulatory requirements.

- Implement data discovery and classification mechanisms to identify and categorize sensitive data within the virtualized environment.

- Use data classification tags and metadata to apply appropriate security controls and access restrictions based on the data’s sensitivity and criticality.

- Monitor the virtualized environment for potential security breaches, unauthorized access attempts, and anomalous activities.

- Set up automated alerts and notifications to promptly detect and respond to security incidents, minimizing the impact of data breaches.

- Keep the data virtualization platform and associated components updated with the latest security patches and updates.

- Regularly assess and address any vulnerabilities or security weaknesses in the virtualized environment to maintain a robust security posture.

By implementing these security and governance strategies, organizations can ensure data confidentiality, integrity, and availability within the virtualized environment. Collaborating closely with security teams, compliance officers, and data governance experts is essential to develop and enforce comprehensive security and governance policies tailored to the organization’s specific requirements.
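A minimal sketch of how role-based access control, masking, and auditing might be enforced at the virtual layer follows. The roles, column names, masking rule, and the `read_row` helper are all assumptions for illustration; real platforms express these policies through their own configuration rather than application code.

```python
# Columns each role may read (hypothetical policy).
ROLE_COLUMNS = {
    "analyst": {"patient_id", "diagnosis"},
    "billing": {"patient_id", "ssn", "balance"},
}
# Columns a role may see only in masked form.
MASKED_FOR = {"billing": {"ssn"}}

audit_log = []  # (user, role, outcome) tuples for compliance reporting


def mask(value):
    """Keep the last four characters and replace the rest with asterisks."""
    text = str(value)
    return "*" * max(len(text) - 4, 0) + text[-4:]


def read_row(user, role, row):
    """Apply column-level access control and masking, and record the access."""
    allowed = ROLE_COLUMNS.get(role)
    if allowed is None:
        audit_log.append((user, role, "denied"))
        raise PermissionError(f"role {role!r} has no access to this view")
    masked = MASKED_FOR.get(role, set())
    result = {
        col: (mask(val) if col in masked else val)
        for col, val in row.items()
        if col in allowed
    }
    audit_log.append((user, role, "allowed"))
    return result


record = {"patient_id": 17, "diagnosis": "J45", "ssn": "123-45-6789", "balance": 250.0}
analyst_view = read_row("dana", "analyst", record)
billing_view = read_row("lee", "billing", record)
print(analyst_view)
print(billing_view)
```

Because the policy lives in one place, every consumer of the view gets the same restrictions regardless of which source system the columns come from.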

Future Trends in Data Virtualization

As the data landscape continues to evolve and businesses face new challenges in managing and leveraging their data assets, data virtualization is poised to play an increasingly critical role. Here are some emerging trends and innovations that are shaping the future of data virtualization:

| Trends and Innovations | Description |
|---|---|
| Cloud-Based Data Virtualization | The growing adoption of cloud computing drives the shift towards cloud-based data virtualization solutions. Cloud platforms offer scalability, flexibility, and cost-efficiency, enabling organizations to deploy and manage data virtualization with more agility and at lower cost. Cloud-based data virtualization allows seamless integration with cloud data sources, such as cloud data warehouses, cloud data lakes, and SaaS applications. |
| Real-Time Data Integration | The demand for real-time data access and analytics is increasing, requiring data virtualization solutions to support low-latency data integration. Advancements in streaming technologies and real-time data processing frameworks enable data virtualization platforms to handle high-velocity data streams and deliver near-instantaneous insights. |
| AI and Machine Learning Integration | Integrating artificial intelligence (AI) and machine learning (ML) capabilities into data virtualization platforms is emerging as a key trend. AI and ML techniques can be leveraged to automate data discovery, data profiling, and data quality assessments, enhancing the efficiency and accuracy of data virtualization processes. AI-powered recommendations and intelligent data matching algorithms can assist in identifying relationships between data entities and suggesting optimal data integration strategies. |
| Self-Service Data Virtualization | The trend towards self-service data access and exploration drives the development of user-friendly and intuitive data virtualization interfaces. Self-service data virtualization allows business users and data analysts to easily discover, access, and combine data from various sources without heavy reliance on IT teams. Visual data modeling, drag-and-drop data integration, and natural language query capabilities enable non-technical users to leverage data virtualization effectively. |
| Hybrid and Multi-Cloud Data Virtualization | As organizations adopt hybrid and multi-cloud strategies, data virtualization solutions evolve to support seamless data integration across different cloud platforms and on-premises systems. Hybrid data virtualization allows for the unified access and integration of data from both cloud and on-premises sources, enabling organizations to leverage the benefits of cloud computing while maintaining control over sensitive data. |
| Edge Data Virtualization | With the proliferation of Internet of Things (IoT) devices and edge computing, data virtualization extends to the edge. Edge data virtualization enables the integration and processing of data from IoT sensors and edge devices in real time, reducing latency and enabling faster decision-making. Data virtualization at the edge allows for efficient data aggregation, filtering, and analysis, optimizing bandwidth utilization and enabling edge analytics. |

As these trends continue to shape the data virtualization landscape, organizations must stay informed and adapt their strategies accordingly. By embracing innovative data virtualization technologies and approaches, businesses can unlock new opportunities for data-driven insights, agility, and competitive advantage.

Data Virtualization in Specific Industries

Data virtualization finds extensive applications across various industries, each with unique challenges and opportunities. Let’s explore how data virtualization is used in some specific sectors:

- In healthcare, data virtualization enables patient data integration from disparate sources, such as electronic health records (EHRs), clinical trial databases, and medical imaging systems.

- Data virtualization allows healthcare providers to create a unified view of patient data, facilitating personalized medicine, improved clinical decision-making, and enhanced patient outcomes.

- Challenges in healthcare data virtualization include ensuring data privacy and compliance with regulations like HIPAA, managing complex healthcare data models, and integrating data from legacy systems.

- Financial institutions leverage data virtualization to integrate data from various sources, including core banking systems, trading platforms, risk management systems, and customer relationship management (CRM) systems.

- Data virtualization enables real-time access to financial data, facilitating faster decision-making, improved risk assessment, and enhanced customer service.

- Challenges in financial data virtualization include handling large volumes of transactional data, ensuring data security and compliance with regulations like PCI DSS and GDPR, and integrating data from siloed systems.

- Retailers use data virtualization to integrate data from multiple channels, such as brick-and-mortar stores, e-commerce platforms, social media, and customer loyalty programs.

- Data virtualization allows retailers to gain a 360-degree view of customers, enabling personalized marketing, optimized inventory management, and improved supply chain efficiency.

- Challenges in retail data virtualization include managing diverse data formats, integrating data from external partners and suppliers, and handling large volumes of customer and transactional data.

- Telecom companies leverage data virtualization to integrate data from various sources, including billing systems, network performance monitoring systems, and customer service platforms.

- Data virtualization enables telecom providers to gain real-time insights into network usage, customer behavior, and service quality, facilitating proactive issue resolution and improved customer experience.

- Challenges in telecom data virtualization include handling large volumes of streaming data, ensuring data privacy and security, and integrating data from legacy systems and network infrastructure.

While the specific use cases and challenges may vary across industries, the fundamental benefits of data virtualization remain consistent. Data virtualization empowers organizations to make data-driven decisions, optimize operations, and deliver superior customer experiences by enabling real-time data access, integration, and analysis.

To adopt data virtualization successfully, organizations must consider industry-specific data models, regulatory requirements, and best practices. Collaborating with industry experts, technology partners, and data virtualization solution providers can help navigate the unique challenges and maximize the value of data virtualization in each sector.

Data Virtualization Best Practices

To maximize the benefits of data virtualization and ensure successful implementation, organizations should adhere to the following best practices:

| Best Practices | Description |
|---|---|
| Define Clear Business Objectives | Clearly define the business objectives and use cases for data virtualization. Align data virtualization initiatives with the organization's overall data strategy and business goals. Identify the key stakeholders and data consumers who will benefit from virtualized data access. |
| Assess Data Landscape and Requirements | Conduct a thorough assessment of the organization's data landscape, including data sources, formats, and volumes. Identify the data integration and access requirements of different business units and user groups. Evaluate the data virtualization solution's scalability, performance, and security needs. |
| Establish Data Governance Framework | Develop a robust data governance framework that defines data ownership, stewardship, and quality standards. Establish data access controls, security measures, and compliance policies for the virtualized environment. Implement data lineage and auditing mechanisms to ensure data traceability and accountability. |
| Design Optimal Data Models | Create logical data models representing the unified data view across different sources. Optimize data models for performance, scalability, and ease of use. Collaborate with business stakeholders and data consumers to ensure data models meet their requirements. |
| Leverage Caching and Performance Optimization | Implement caching mechanisms to improve query performance and reduce latency. Optimize query execution by pushing down processing to source systems when possible. Monitor and tune performance regularly to ensure optimal response times and resource utilization. |
| Ensure Data Quality and Consistency | Implement data quality checks and validation rules to ensure the accuracy and consistency of virtualized data. Establish data cleansing and enrichment processes to handle data inconsistencies and improve data quality. Monitor and measure data quality metrics to promptly identify and address issues. |
| Provide Self-Service Capabilities | Enable self-service data access and exploration for business users and analysts. Provide intuitive user interfaces and data discovery tools for easy access and analysis. Offer users training and support so they can use the data virtualization solution effectively. |
| Collaborate with IT and Business Teams | Foster close collaboration between IT teams and business stakeholders throughout the data virtualization implementation. Involve business users in designing and testing virtualized data models and access mechanisms. Establish clear communication channels and feedback loops to address issues or requirements promptly. |
| Plan for Scalability and Future Growth | Design the data virtualization architecture to accommodate future growth and scalability requirements. Consider the potential increase in data volumes, user concurrency, and data source diversity. Regularly assess and optimize the data virtualization infrastructure to ensure long-term performance and reliability. |
| Continuously Monitor and Improve | Implement monitoring and alerting mechanisms to identify and resolve any issues or performance bottlenecks proactively. Regularly collect user feedback and assess the effectiveness of the data virtualization solution. Continuously iterate and improve the data virtualization implementation based on user requirements and emerging best practices. |

By following these best practices, organizations can effectively implement and leverage data virtualization to unlock the full potential of their data assets, drive innovation, and gain a competitive edge in today’s data-driven landscape.
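The data quality practice above can be sketched as a small set of validation rules applied to rows coming out of a virtual view. The `check_quality` function, the two rules (required fields and a unique key), and the sample rows are assumptions chosen for illustration, not a complete data quality framework.

```python
def check_quality(rows, required, unique_key):
    """Return (row_index, problem) pairs for missing values and duplicate keys."""
    issues = []
    seen = set()
    for index, row in enumerate(rows):
        for field in required:
            if row.get(field) in (None, ""):
                issues.append((index, f"missing {field}"))
        key = row.get(unique_key)
        if key in seen:
            issues.append((index, f"duplicate {unique_key}={key}"))
        seen.add(key)
    return issues


# Rows as they might come back from a virtual view over several sources.
virtual_rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 1, "email": "c@example.com"},
]

issues = check_quality(virtual_rows, required=["id", "email"], unique_key="id")
for index, problem in issues:
    print(f"row {index}: {problem}")
```

Running checks like these on a schedule, and tracking the number of issues over time, gives a simple data quality metric to monitor.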

Conclusion: The Transformative Power of Data Virtualization

Throughout this blog post, we have explored the key concepts, benefits, tools, and best practices of data virtualization. We have seen how data virtualization differs from traditional data integration approaches, offering agility, flexibility, and cost-efficiency in managing and leveraging enterprise data.

Data virtualization has numerous benefits, including faster time to insights, improved data governance and security, reduced data movement and replication, and enhanced data agility. It empowers organizations to respond quickly to changing business needs, gain a holistic view of their data landscape, and enable self-service data access for business users.

As organizations navigate the challenges of big data, cloud computing, and real-time analytics, data virtualization has become an indispensable tool in their data management arsenal. The future of data virtualization is bright, with emerging trends such as cloud-based solutions, AI and machine learning integration, and self-service capabilities shaping its evolution.

To implement data virtualization successfully and realize its transformative potential, organizations must follow best practices. These include defining clear business objectives, establishing robust data governance frameworks, designing optimal data models, and fostering collaboration between IT and business teams.

As data grows in volume, variety, and velocity, the importance of data virtualization will only continue to increase. Organizations that embrace data virtualization and invest in the necessary skills, tools, and processes will be well-positioned to unlock the full value of their data assets, drive innovation, and gain a competitive advantage in the digital age.

If your organization wants to embark on a data virtualization journey or optimize its existing data virtualization practices, partnering with experienced data virtualization experts and solution providers is crucial. By leveraging their knowledge and expertise, you can navigate the complexities of data virtualization and implement a solution that aligns with your unique business requirements and goals.

The transformative power of data virtualization is undeniable. By embracing this technology and adopting a data-driven mindset, organizations can turn their data into a strategic asset, drive smarter decisions, and achieve unprecedented agility, efficiency, and innovation. The future belongs to those who can harness the full potential of their data, and data virtualization is the key to unlocking that potential.
